F8: 2017 - Takeaways & Notes

As it does every year, Facebook kicked off F8 with a plethora of exciting new announcements and product reveals, and this year was no different. I flew to California with my team to attend the keynote. Here are some of my key takeaways and notes from the conference.

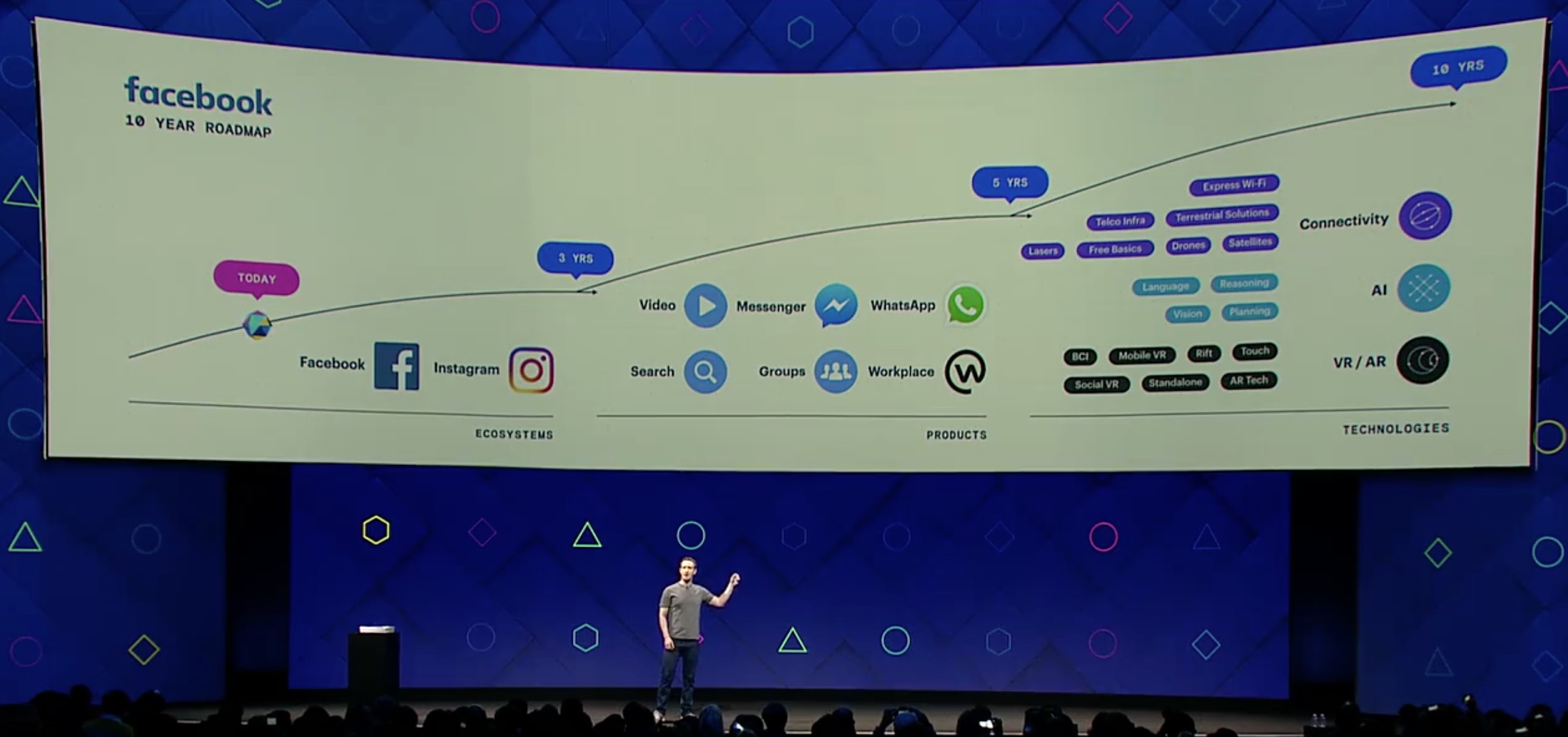

Mark Zuckerberg kicked it off by talking about the company's 10-year vision and its heavy focus on VR/AR.

Mark also dove deep into some cool AR announcements, launching a beta version of Facebook's AR platform, which paves the way for the first AR platform on mobile.

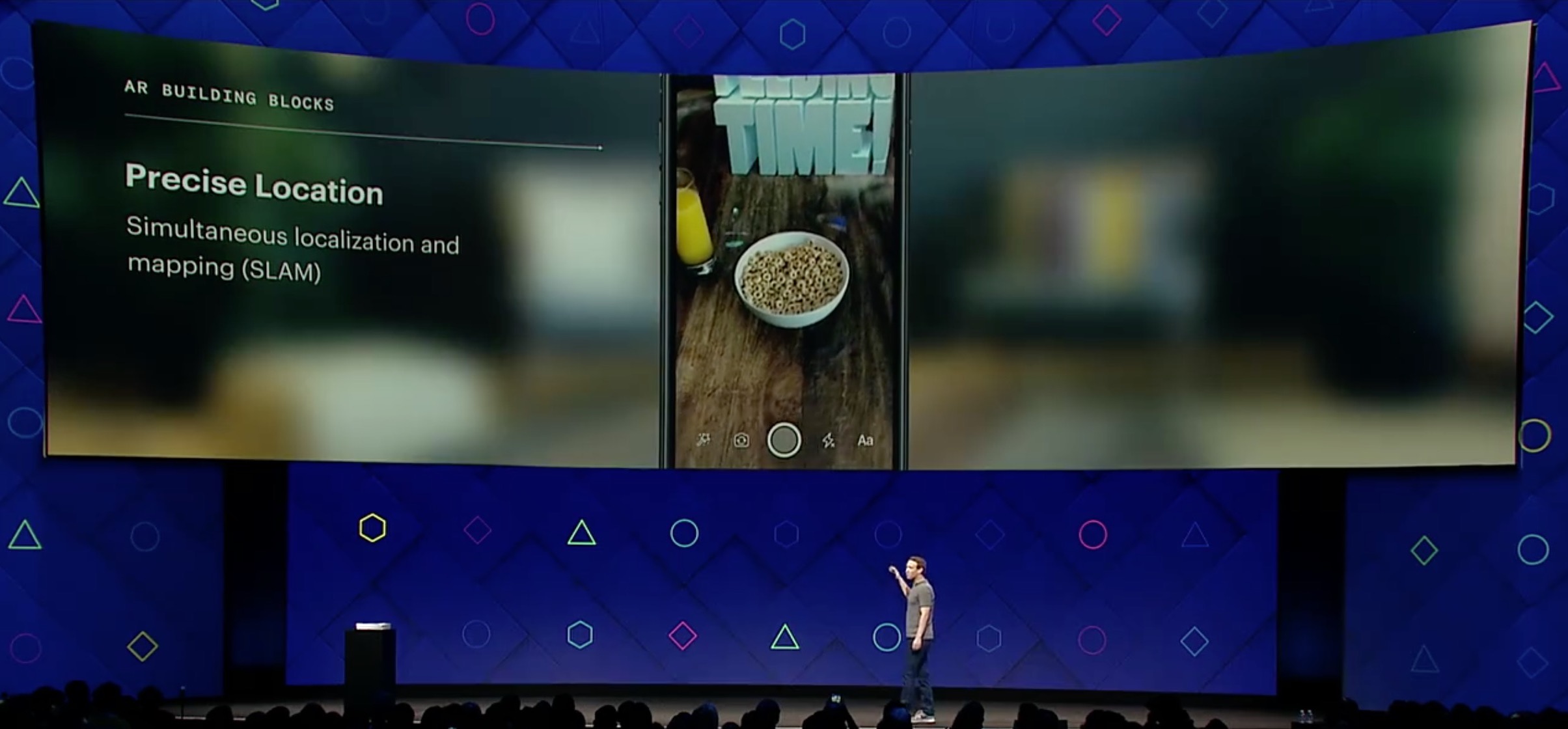

The platform uses a technique called SLAM (simultaneous localization and mapping), which anchors virtual objects to precise locations in the environment, creating a true feeling of augmented reality.

Using object recognition along with depth perception, a still photo can be turned into a true 3D scene. This could be useful for recreating old moments, or for turning childhood photos into a 3D simulation.

One of the most interesting features that could become a reality soon is the sweet marriage between AR and location. Imagine going up to a restaurant, pulling out your phone camera, and seeing what your friends think about the place. Imagine leaving a message in some foreign country for your descendants to discover years later (too much sci-fi?). Of course, the main bottleneck here is having to pull out your smartphone to do any of this, which I will cover in a bit.

The utopian vision of the future is a truly augmented, mixed reality delivered through simple glasses (that actually look normal) which can map anything into your real world, be it a TV projected on your wall, a 3D game, or even holograms of your friends so you can 3D-chat.

Coming back to today's world, Mark stated that we already have most of this technology in our smartphone cameras, so it makes perfect sense to start the AR platform there.

Mark believes that the smartphone is eventually going to die and that smart glasses will replace it, with everything in mixed reality right in front of you. The great thing is that the technology Facebook is building today is the same technology that will go into those smart glasses in the future, so it is definitely on the right track. This is actually what impressed me most about the keynote. I believe this vision, even if it is still far off, is very probable. The form factor might turn out to be different, but I believe the smartphone will be replaced sooner or later.

Just imagine your Facebook AR glasses (with the help of facial recognition) showing you more information about a friend you've met before, or telling you their name. Sounds creepy? It's the future.

However, reaching this stage will take a lot of scientific advancements, and here is why I think Facebook will be the first to get there:

- Optics & Displays: Oculus

- Interaction: Oculus

- Computer Vision: Facebook

- AI: Facebook AI Labs

- System Design

- UX: Facebook

The day will come, and it will completely change how we think about technology today.

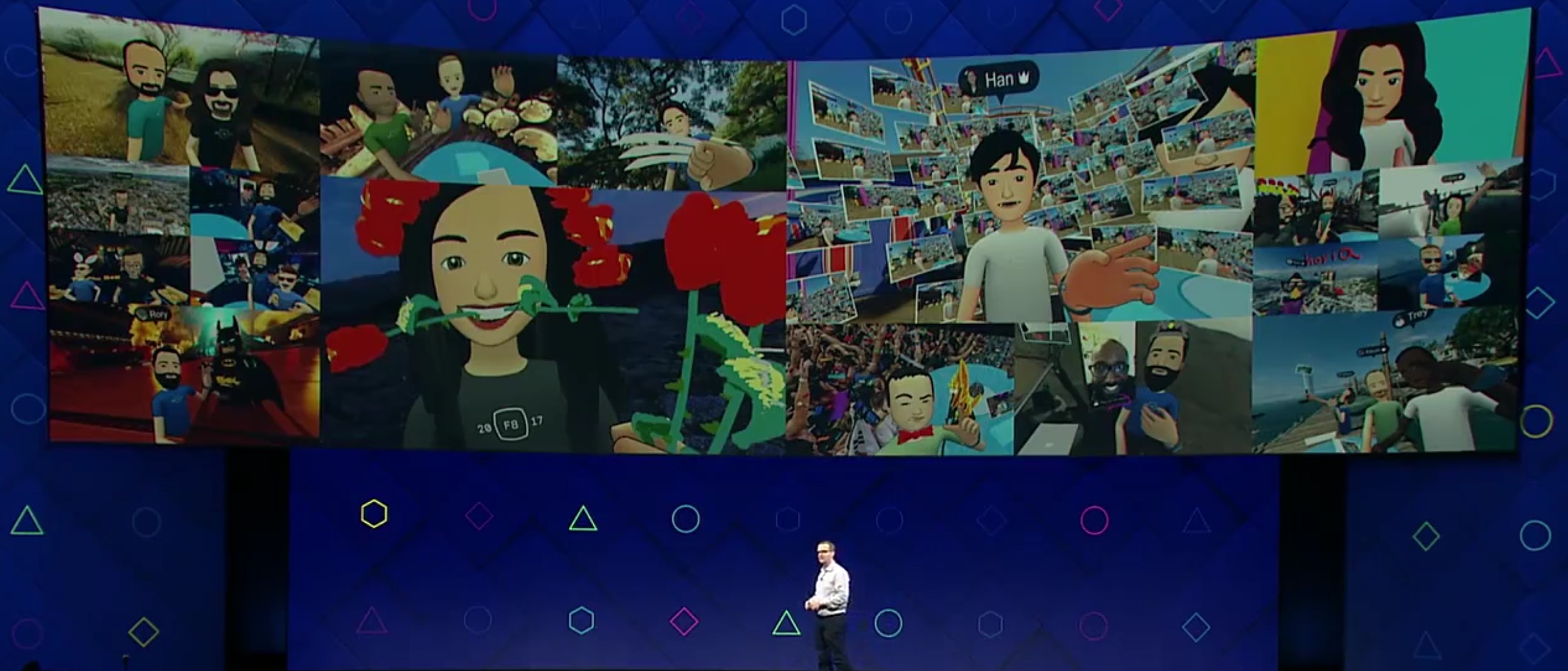

Virtual Reality is also in mind

Putting Oculus to use since acquiring it in 2014 was a good choice. Facebook is trying to pave the way for a full VR Facebook world in the future. It is still in its very early stages, but it is showing great progress year over year.

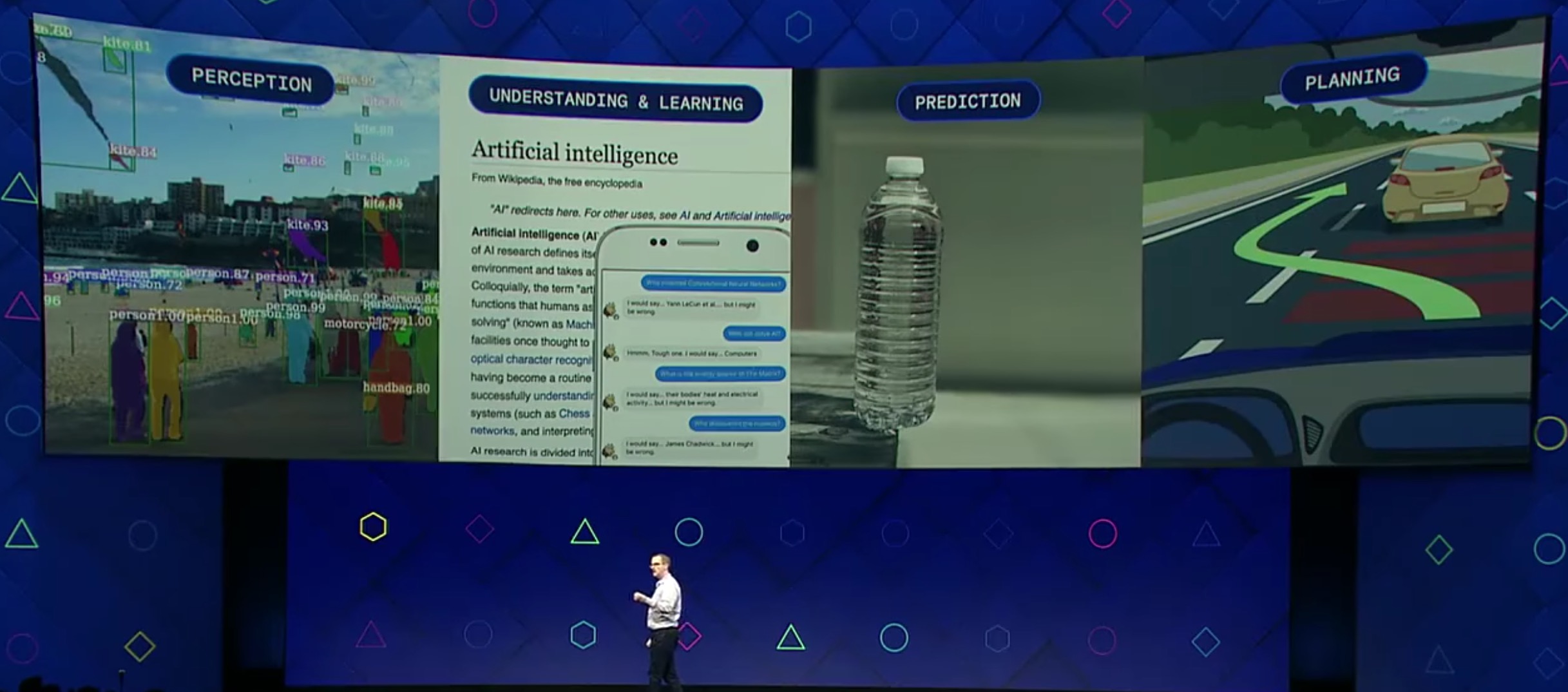

Future AI Goals in mind

AI research at Facebook (and in the industry in general) is growing smarter and smarter every day. Two of the most important use cases right now are prediction and autonomous driving (like what Tesla is doing). I believe this is the most important field right now: making computers understand us is the next step in the technological revolution.

Free 360 Camera, WOO!

Facebook has also recently integrated 360 videos into its platform and, as a result, generously gifted everyone at the F8 conference a free Giroptic IO 360 camera!

3D 360 is the new 360

One of the more interesting subjects is how Facebook is investing in capturing three-dimensional 360 images. They talked about their Surround360 24-camera array, which aims to capture the full scene in 3D so that you can, with an Oculus headset for example, move around inside the scene. They also demoed that, with the help of AI algorithms, this can be done with a smartphone camera too.

Imagine taking a photo with your phone, then going back to relive that moment in all of its 3D glory. Now that's what I call a Live Photo, Apple.

Skin talks!

Another interesting demo showed actuators placed on a person's body being used to convey a sentence to that person without speech or vision, just through the skin!

The actuators vibrate in specific sequences, each of which signals to the test subject's brain a particular object or verb she has learned. They managed to reach three-word sentences in just under an hour with ease. This is a very interesting advancement in the field of alternative senses.

In the coming weeks, I will post more notes on the individual talks that interested me most. Thank you for reading.